I wanted to understand how local AI actually works. So I built every layer.

No API keys.

No cloud calls.

No external dependencies.

services: api FastAPI — RAG orchestration db PostgreSQL + pgvector — semantic memory embeddings HuggingFace TEI — local model search SearXNG — 11 engines, zero exposure

What I learned

cosine similarity vs. L2 distance

Cosine similarity beats L2 distance for text because direction matters more than magnitude. Two paragraphs about the same topic land in similar directions in 384-dimensional space, even if one is three times longer. L2 penalizes that length difference. Cosine ignores it.

384 dimensions are enough

The all-MiniLM-L6-v2 model compresses a full sentence into 384 floats. That sounds lossy, but in practice the vectors separate topics cleanly. A question about "Docker networking" lands far from "French cooking" and close to "container port mapping." The compression preserves relationships that matter.

MCP composability

Each MCP server does one thing: one retrieves documents, one manages conversation memory, one runs web searches. The model decides which to call and in what order. Composability comes from keeping each server simple enough that the model can reason about combining them.

pgvector indexing tradeoffs

IVFFlat indexes are fast to build but lose accuracy on small datasets. HNSW indexes are slower to build but give better recall at any scale. For a personal knowledge base with thousands of entries (not millions), HNSW is the clear choice.

How it works

Question

User asks in natural language

Embedding

all-MiniLM-L6-v2 via HuggingFace TEI

Vector search

pgvector cosine similarity in PostgreSQL

Context assembly

Top-k results ranked and formatted

Local LLM

LM Studio running on local hardware

Response

Answer grounded in retrieved context

What I use it for

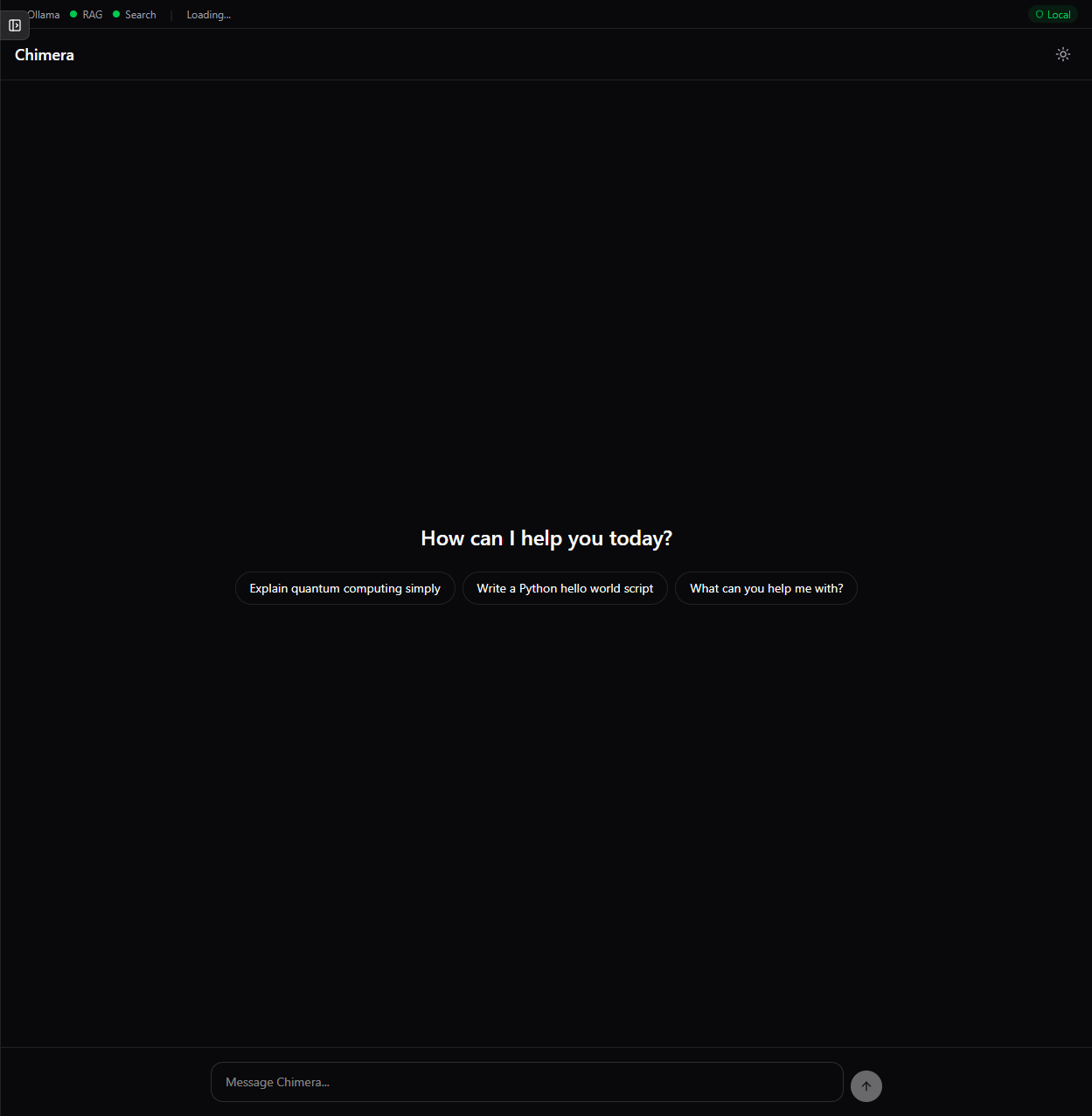

Chimera started as a way to answer my own questions about how RAG systems work. Once the pieces were in place, I started using it daily. I search my own notes by meaning instead of keywords. I ask questions about research papers I have ingested. I have conversations that stay entirely on my machine, with no data leaving the local network. It is the kind of tool that only exists because I built it for myself.

Privacy is structural,

not a policy.

Semantic conversation memory. 7 MCP tool servers. Web search across 11 engines. 4-service Docker Compose. No cloud dependencies.